Reinforcement Learning in the Wind

Deep reinforcement learning is an AI technique for choosing how to act in situations that arise repeatedly over time. It has shown tremendous success in playing games such as Atari video games, chess, Go, and StarCraft, going as far as beating professional human players.

While games present certain interesting scientific challenges, there are many real-world problems that could benefit from deep reinforcement learning, but where it is not yet known how to apply it effectively. One such problem is active wake control in wind farms.

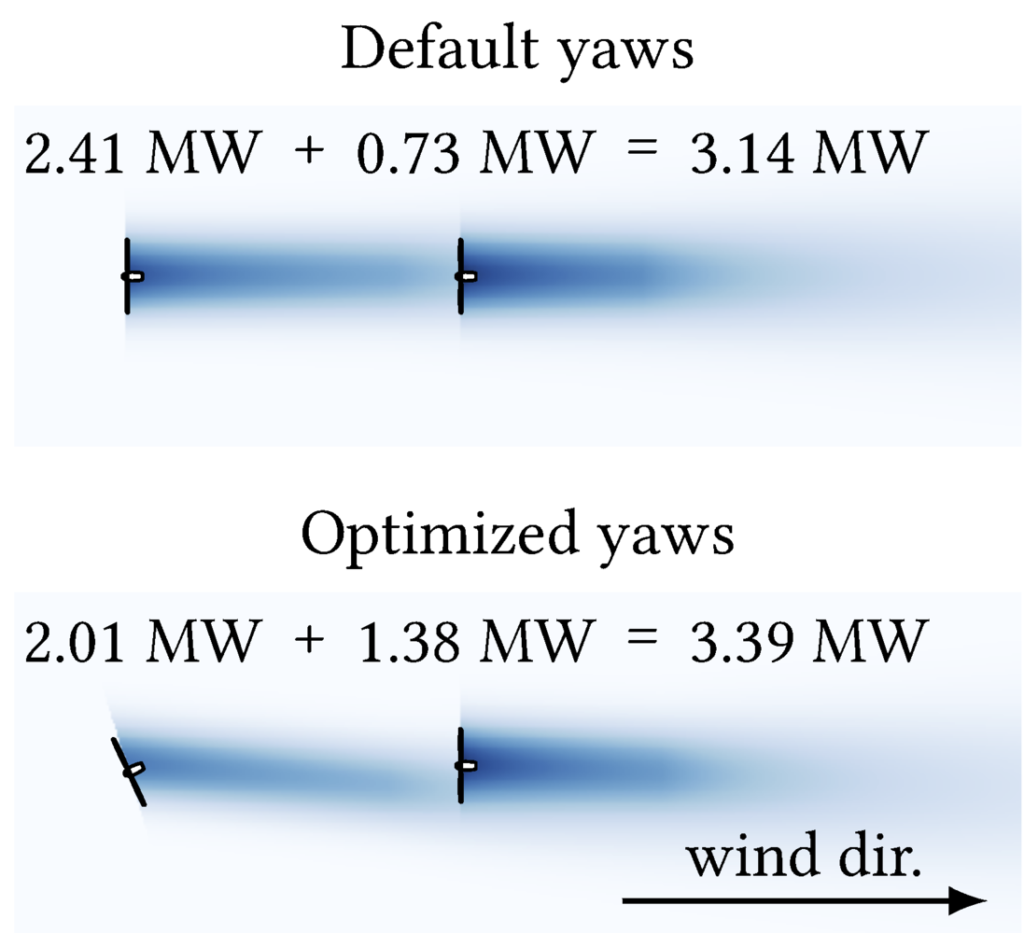

When a turbine extracts energy from the wind, it leaves an area of lower wind speed and higher turbulence behind its rotor, known as a wake. If the wake reaches another turbine, that turbine produces less power. By deliberately misaligning turbines with the wind, it is possible to redirect their wakes away from other turbines. This is known as active wake control. Research shows that in certain cases wake control can boost the power output of a wind farm by as much as 12%.

How far the wake region extends behind a wind turbine changes constantly. As the wind direction and speed, air temperature, and other atmospheric conditions change, so does the best possible wake control strategy. The optimal course of action is therefore to adjust the wake control repeatedly throughout the day, which is exactly the kind of repeated decision-making that reinforcement learning is designed for!

And yet, research on reinforcement learning for active wake control remains scarce. The main reason is that reinforcement learning requires millions of interactions with the problem in order to learn reasonable behaviors. Games can be easily simulated, which makes it possible to collect large amounts of data, but real-world problems do not always provide sufficient data.

To bridge this gap, we have created a novel open-source wind farm simulator tailored to reinforcement learning research. It is based on an existing simulator called FLORIS, but adheres to OpenAI Gym, a widely used standard interface for reinforcement learning environments. As a result, our simulator allows users to set up new active wake control experiments with just a few lines of code, facilitating research in active wake control via reinforcement learning. For example, our own experiments revealed that the performance of deep reinforcement learning algorithms can be significantly improved just by changing the way the turbine rotations are encoded. We also found that, compared to the traditional wake control method, deep reinforcement learning is more robust to measurement errors in the observed data, which can be especially important when working with real-world sensors. These results have been published at the AAMAS conference [1].
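To give a feel for what a Gym-style interaction loop looks like, here is a minimal, self-contained sketch. It does not use our simulator or FLORIS: `ToyTwoTurbineEnv`, its yaw limit, and its wake formulas are all hypothetical stand-ins invented for illustration, and the numbers it produces have no physical meaning. It only mimics the classic `reset`/`step` shape, where an agent observes the wind, chooses a yaw angle, and receives the farm's power as a reward.

```python
import math
import random


class ToyTwoTurbineEnv:
    """Hypothetical two-turbine farm with a Gym-style reset/step interface.

    The physics below is a made-up toy model, NOT FLORIS and NOT the
    simulator described in this post.
    """

    MAX_YAW_DEG = 30.0  # assumed yaw limit, for illustration only

    def __init__(self, seed=0):
        self.rng = random.Random(seed)
        self.wind_speed = 8.0

    def reset(self):
        # Observation: the current wind speed in m/s.
        self.wind_speed = self.rng.uniform(6.0, 12.0)
        return [self.wind_speed]

    def step(self, action):
        # Action: yaw misalignment of the upstream turbine in degrees.
        yaw = max(-self.MAX_YAW_DEG, min(self.MAX_YAW_DEG, float(action)))
        # Toy assumption: a yawed turbine loses power by a cosine-cubed factor.
        upstream = self.wind_speed ** 3 * math.cos(math.radians(yaw)) ** 3
        # Toy assumption: an aligned wake removes 40% of the downstream wind
        # speed, and yawing steers the wake away so the deficit shrinks.
        deficit = 0.4 * math.exp(-((yaw / 15.0) ** 2))
        downstream = (self.wind_speed * (1.0 - deficit)) ** 3
        # Reward: farm power relative to two aligned turbines without wakes.
        reward = (upstream + downstream) / (2.0 * self.wind_speed ** 3)
        # The wind changes between steps, so the observation changes too.
        self.wind_speed = self.rng.uniform(6.0, 12.0)
        return [self.wind_speed], reward, False, {}


def average_reward(env, policy, steps=100):
    """Run a policy (observation -> yaw in degrees) and average its reward."""
    obs = env.reset()
    total = 0.0
    for _ in range(steps):
        obs, reward, done, info = env.step(policy(obs))
        total += reward
    return total / steps


if __name__ == "__main__":
    env = ToyTwoTurbineEnv(seed=0)
    print("always aligned:", average_reward(env, lambda obs: 0.0))
    print("fixed 20 degree yaw:", average_reward(env, lambda obs: 20.0))
```

In this toy model, a fixed 20-degree yaw earns a higher average reward than keeping the upstream turbine aligned, which mirrors the idea of active wake control in a very simplified way. A reinforcement learning agent would take the place of the fixed policies and learn which yaw angle to choose from the observations.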

To learn more about the simulator, visit https://github.com/AlgTUDelft/wind-farm-env. And if you want to collaborate with me and my colleagues on active wake control via reinforcement learning, please feel free to contact us. For example, we are interested in using high-fidelity atmospheric models such as large-eddy simulations or actual field experiments to demonstrate the strengths of different reinforcement learning algorithms for wind farm control.

[1] Neustroev, G., Andringa, S. P., Verzijlbergh, R. A., & De Weerdt, M. M. (2022, May). Deep Reinforcement Learning for Active Wake Control. In Proceedings of the 21st International Conference on Autonomous Agents and Multiagent Systems (pp. 944–953).
